Unsure about the best-suited Hadoop implementation strategy for your business? Get expert consultation from VE’s certified Hadoop developers.

Hire certified Hadoop developers from India for migration support that moves your existing frameworks and platforms to Hadoop seamlessly.

VE’s Hadoop application developers from India can provide continuous support and maintenance for your critical business processes and improve their functionality.

Hire Hadoop developers from India to run a health check of your system: they review your data cluster and submit a comprehensive report.

Kick-start your project straightaway with our popular No-Obligation, No-Payment, 1-Week Free Trial. Continue with the same resource if satisfied.

VE’s free, bespoke recruitment support takes you from searching to hiring within days, if not hours. No more long waiting periods or expensive local recruitment fees for a single hire.

Get your own ‘offshore office in India’, sidestep overheads such as employee benefits, and pay only your certified Hadoop developer’s salary.

As an ISO/IEC 27001:2013-certified and CMMI Level 3-assessed company, VE assures its clients of breach-proof data security and confidentiality at all times.

A certified big data professional with extensive experience in batch and stream processing as well as ETL/ELT development.

An extensively experienced data engineer and database developer adept at building data-driven applications and systems.

A highly experienced Python developer with a focused, results-driven approach to building data-driven systems.

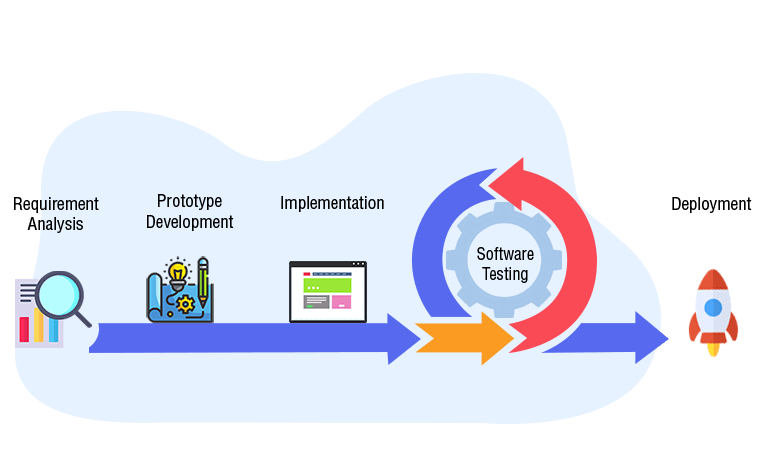

Your expert Hadoop developer first understands your project requirements, business expectations and goals in order to deliver a future-ready Big Data solution.

VE’s certified Hadoop developer from India then builds a prototype based on the project requirements and sends it to you for approval.

Once the prototype is approved, your certified Hadoop programmers from India begin developing the software and integrating it with your existing system.

VE’s testing team then thoroughly evaluates the system and reports any bugs and issues to the Hadoop development team for fixing.

After your Hadoop application developer has fixed these bugs and issues, the updated Hadoop solution is deployed to your live system.

We couldn't have grown as fast as we have without VE's help.

Thanks to VE, our conversion rate & sales have jumped by 200%.

My VE has helped us expand our business & gain potential clients.
