Inside the Superintelligence Timeline War: Who Gets to Predict the Future of AGI?

May 15, 2026 / 16 min read

May 15, 2026 / 20 min read / by Team VE

In early 2024, Jensen Huang stood on stage and said something that would have sounded implausible even a few years earlier. Young people, he argued, should not necessarily learn to code in the way previous generations had, because artificial intelligence would increasingly handle that layer of work. The statement was presented as a shift already underway in how software would be created.

Around the same period, Sam Altman began describing models that could carry out extended sequences of cognitive work, moving beyond single responses into multi-step problem solving. Inside Microsoft, Satya Nadella has positioned Copilot and emerging agent workflows as a reconfiguration of how software is written, deployed, and interacted with across the enterprise stack. Across these statements, the underlying message has been consistent even when the language has varied. The boundary between human-driven engineering and machine-executed work is expected to shift, and to shift soon.

The scale of adoption has added weight to that expectation. GitHub Copilot alone has moved from being an experimental tool to widespread usage, with Microsoft reporting that a significant share of developers now rely on it in their daily workflows, and internal studies suggesting measurable gains in speed for routine coding tasks. At the same time, newer systems are being demonstrated that go beyond assistance, attempting to interpret goals, generate code, test it, and iterate toward completion with limited human intervention.

Taken together, these signals have begun to form a narrative that feels increasingly difficult to ignore. The role of the software engineer, long considered central to the digital economy, is being recast as something that may soon no longer be required in the same way.

But there is a structural problem in how this narrative is shaping up. The expectation is moving faster than the underlying capability, and that gap is already influencing how companies hire, how products are designed, and how the future of engineering work is being imagined before the systems themselves have fully earned that position.

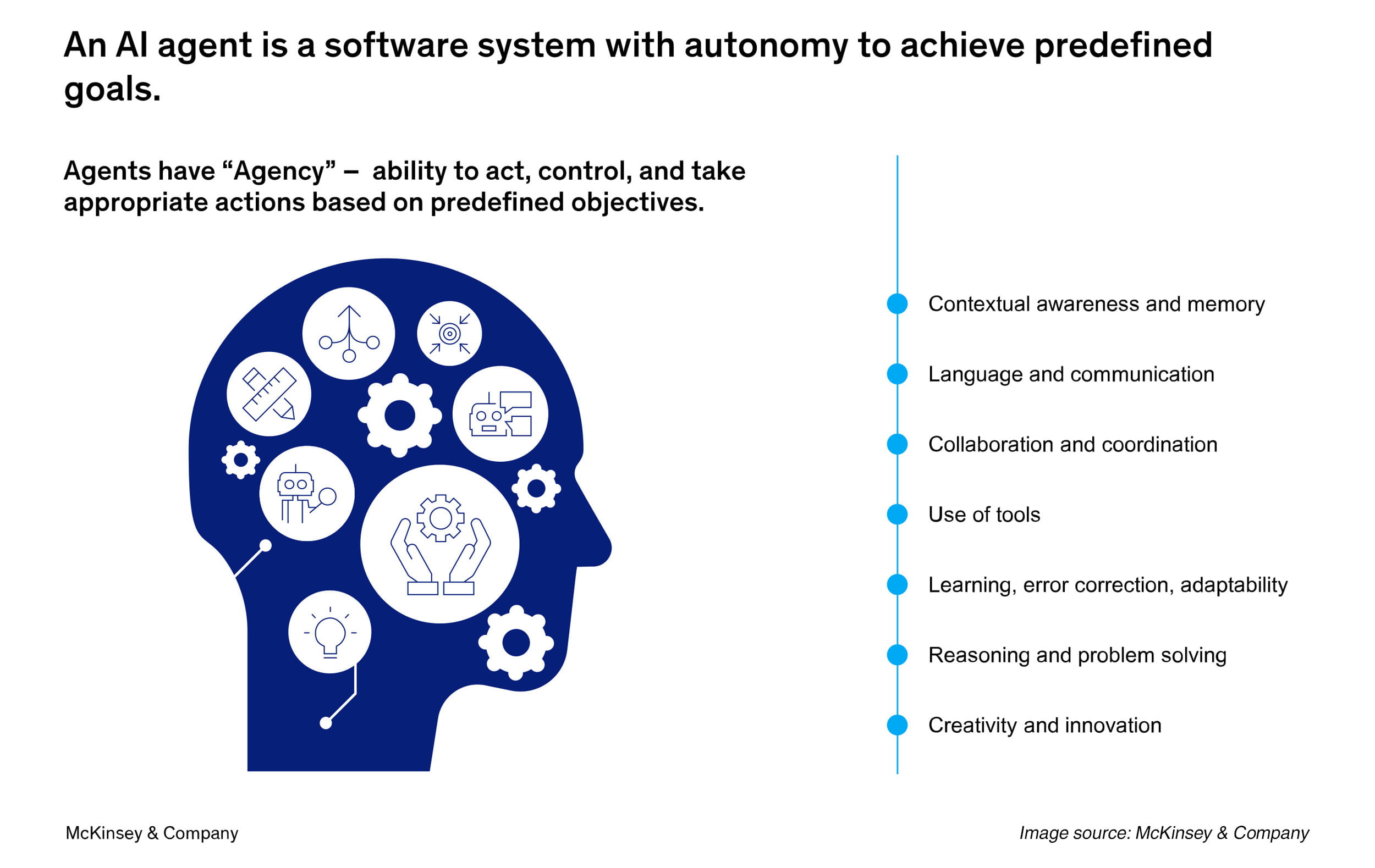

The term “AI agent” has entered the mainstream vocabulary of software development at an unusual speed, but its meaning remains unsettled. In different contexts, the same phrase is used to describe systems that range from advanced code assistants to semi-autonomous workflows capable of chaining together multiple steps toward a defined objective. The result is that progress across different layers of capability is often compressed into a single narrative, giving the impression of a more unified trajectory than actually exists.

At one end of this spectrum are systems like GitHub Copilot, which operate as augmentation tools embedded directly into the developer’s workflow. These systems generate code, suggest completions, and assist with debugging in ways that reduce friction in well-defined tasks. Their impact is measurable and already visible. GitHub has reported that developers using Copilot complete coding tasks faster and spend less time on repetitive work.

Independent research has also suggested that AI-assisted coding can improve developer productivity in structured environments, particularly when problems are clearly scoped. These gains are meaningful, but they operate within a bounded frame. The system assists, but it does not own the outcome.

At the other end are what are increasingly being presented as agents in the fuller sense of the term: systems that can take a high-level goal, break it into sub-tasks, interact with tools, generate code, test it, and iterate toward completion. Companies like Anthropic have demonstrated early versions of this approach with tool-using models that can execute sequences of actions rather than produce isolated outputs. More recently, startups such as Cognition have introduced systems like Devin, described as an “AI software engineer” capable of planning and executing development tasks across an entire workflow.

The distance between these two categories is structural. The movement from assistance to autonomy requires a shift in how systems handle context, maintain state across long interactions, and recover from failure. In controlled settings, where tasks are tightly scoped and boundaries are clear, agent-like behavior can look coherent and reliable.

However, the conditions under which real software is built are materially different. Production environments are shaped by legacy systems, incomplete documentation, conflicting requirements, and dependencies that change over time. Code is not written in isolation but as part of systems that evolve, accumulate technical debt, and require ongoing maintenance. Decisions are rarely binary; they involve trade-offs between performance, scalability, readability, and long-term cost, many of which depend on context that is not easily captured in a prompt or a single execution loop.

There is also a more subtle shift taking place in how these systems are evaluated. Traditional software engineering is judged not just by whether code works in the moment, but by how it behaves over time. Stability, maintainability, and adaptability are central to the role. AI systems, by contrast, are often evaluated on immediate output quality, on whether they can produce a working solution within a limited interaction. This difference in evaluation criteria makes early demonstrations appear more complete than they are, because the dimension of time, which is central to engineering work, is not fully represented.

None of this diminishes the significance of what has been achieved. The ability of models to generate functional code, reason across multiple steps, and interact with tools marks a real shift in the capabilities of software systems. The trajectory is clearly upward, and the pace of iteration remains high. But the way that trajectory is being described often collapses multiple stages of progress into a single narrative of replacement, rather than a more complex transition in which roles, responsibilities, and system boundaries are being redefined unevenly.

The current narrative around AI agents draws much of its force from how these systems are demonstrated. In carefully constructed environments, they appear fluid, capable, and increasingly autonomous. A prompt is given, a sequence unfolds, and the system produces code, fixes errors, and arrives at a working output in a way that feels continuous and coherent. These demonstrations are compelling because of how little friction they appear to contain.

The issue is not that these demonstrations are misleading in a direct sense. They reflect real capabilities. The problem is that they represent a narrow slice of the conditions under which software is actually built and maintained. The closer the environment moves toward controlled tasks with clear boundaries, the more coherent the system appears. As these boundaries expand, the behavior becomes less predictable.

This gap becomes visible when systems are tested outside of curated workflows. One of the more widely discussed examples is Devin, introduced by Cognition as an “AI software engineer” capable of handling end-to-end development tasks. In its launch demonstration, the system was shown planning, coding, debugging, and completing tasks with limited intervention. The presentation suggested a level of continuity that, if generalized, would mark a significant shift in how engineering work is structured.

But independent evaluations have been more uneven. Early tests shared by developers and researchers have pointed to difficulties in maintaining long chains of reasoning, handling ambiguous requirements, and recovering from intermediate failures. Tasks that extend beyond tightly scoped problems tend to expose limitations in memory, planning, and error correction. What appears stable in a short sequence begins to fragment over longer horizons.

A similar pattern can be seen in benchmark-driven systems. Anthropic’s work on tool-using models demonstrates how large language models can interact with external systems to execute multi-step tasks. In controlled settings, this produces a form of structured reasoning that resembles agency. The system can decide when to call a tool, interpret the result, and proceed to the next step. Yet even in these environments, performance depends heavily on how the task is framed. Even slight variations in instruction, unexpected outputs, or incomplete context can cause the process to degrade.

The underlying issue lies in how errors are handled and how context is maintained over time. In production environments, problems rarely present themselves in isolation. They are embedded within systems that evolve, where one change can have cascading effects across multiple components. Diagnosing and resolving such issues requires not only local reasoning, but an understanding of how different parts of the system interact over time.

This is where current AI agent systems still struggle. While they can generate plausible solutions to discrete problems, maintaining coherence across extended workflows remains difficult. Context windows, even as they expand, are still finite, and state management across long tasks is fragile. When failures occur, recovery often requires reintroducing context manually, effectively re-centering the system in a way that reduces its autonomy.

There is also a structural difference in how success is measured. Demonstrations tend to emphasize completion, whether a task can be solved within a single session or sequence. In real-world engineering, success is tied to durability. Code must not only work when it is written, but continue to work as dependencies change, requirements evolve, and systems scale. This temporal dimension is largely absent from most demonstrations, which means that the systems are being evaluated on a narrower definition of success than the one that governs actual engineering work.

None of this suggests that the trajectory is stagnant. On the contrary, the pace of improvement in reasoning, code generation, and tool integration has been significant. Systems are becoming more capable of handling multi-step tasks, and the boundary between assistance and partial autonomy is clearly shifting. But the way these capabilities are presented often compresses multiple stages of progress into a single narrative of readiness.

The speed at which the “AI agents replacing engineers” narrative has taken hold cannot be explained by technical progress alone. The capability gains are real, and it would be foolish to dismiss them. But the rate at which the story has travelled says as much about the market around AI as it does about the systems themselves. This is no longer a quiet technical debate about developer productivity. It has become a story shaped by capital, competition, product positioning, and the media’s preference for sharper, more dramatic versions of change.

The clearest signal is the money now surrounding the category. According to McKinsey’s latest global survey on AI, more than half of organizations already report using AI in at least one business function, while investment in generative AI continues to rise across industries. Reuters has also reported that the world’s largest technology companies are expected to spend roughly $650 billion on AI infrastructure in 2026. At that scale, the market is not merely funding tools that make work slightly faster. It is funding the possibility that entire categories of work may be reorganized.

The difference matters because narratives affect valuation. A coding assistant that helps developers complete repetitive tasks faster is useful, but it belongs to a familiar category. A system described as an “AI software engineer” belongs to a much larger one. It implies labor substitution, faster product cycles, lower delivery costs, and a different structure for technical teams. Even when the capability remains partial, the framing carries financial weight. It makes the product easier to fund, easier to explain, and easier to place inside a market already looking for the next major shift.

This is why the language around agents has become so expansive. A company building agent-based development workflows does not want to be seen as another developer-tool vendor. It wants to be seen as building a new layer of software production. When a system like Devin is introduced as an “AI software engineer,” the phrase does more than describe a product. It gives investors, buyers, journalists, and engineers a mental image they can understand immediately, even if the underlying reality is still uneven. The label compresses a complicated transition into a clean story.

Large technology companies operate under a different pressure, but the effect is similar. When Satya Nadella talks about Copilot and agent workflows as part of a broader change in how software is created, or when Sam Altman describes systems capable of carrying out longer sequences of cognitive work, they are not speaking only to developers. They are speaking to investors, enterprise buyers, policymakers, future employees, and competitors at the same time. The message is not simply that the tools are improving. The message is that the company understands where the next layer of value may form.

The media then gives this story its speed. A claim that AI may replace software engineers travels much further than the more accurate but less dramatic claim that AI is changing the distribution of effort inside engineering teams. Productivity improvement is commercially important, but replacement is culturally explosive. It creates anxiety, argument, and urgency. It gives people something to react to. That is why the most extreme version of the story often becomes the most visible one, even when the more accurate version requires more patience to explain.

This is how expectation begins to move faster than capability. The narrative does not require AI agents to be fully production-ready. It only requires them to be plausible enough for founders, investors, executives, and media platforms to organize around the possibility. Once that happens, the story starts shaping hiring plans, product roadmaps, and engineering strategy before the systems themselves have reached the maturity the headline suggests.

The point, then, is not that the replacement narrative is fabricated. It is that it has been simplified. Current AI systems are already changing software work, but the market has a strong incentive to describe that change in its most dramatic form. The real shift is more complicated: engineering work is being redistributed before it is being replaced, and the companies that understand that distinction will make better decisions than those reacting only to the loudest version of the story.


If the narrative around AI agents has moved ahead of current capability, it would still be a mistake to conclude that little is changing. The shift is real, but it is uneven, and in many cases more structural than the headline claims suggest. AI is not simply producing more code. It is changing where effort sits inside the development process, what engineering judgment is used for, and how organizations will need to manage technical work.

One of the clearest changes is the redistribution of effort. Tasks that were once time-consuming but well-defined, such as writing boilerplate code, generating test cases, translating requirements into first drafts, or scanning for obvious errors, are increasingly being handled with AI support. GitHub has reported that most developers using Copilot rely on it regularly, while controlled studies have found that developers can complete certain tasks significantly faster with AI assistance. Those gains are real, but their meaning is often misunderstood. Faster generation does not remove the need for engineering judgment. It changes where that judgment is applied.

As generation becomes easier, evaluation becomes more important. Engineers spend less time constructing every line from scratch and more time reviewing, validating, integrating, and correcting machine-generated output. The constraint shifts from writing code to deciding whether the code should exist in that form at all. A function may run correctly in isolation and still be wrong for the architecture, insecure in a specific environment, expensive to maintain, or misaligned with how the product is expected to evolve. Those decisions require context, trade-off thinking, and ownership of the system over time.

The organizational implication is larger than the coding task itself. If AI increases the volume and speed of output, companies need stronger ways to control what enters production. Review processes, documentation standards, testing discipline, security checks, and accountability structures become more important, not less. A team that uses AI casually may see a short-term speed gain. A team that redesigns its engineering workflow around AI-assisted development can get the benefit without losing control of quality.

The early signs of this shift are already visible in how large technology companies speak about AI-assisted development. Microsoft has promoted Copilot as a major productivity layer across software work, but its own positioning still keeps developers responsible for validation, judgment, and final decisions. The tool accelerates parts of the workflow, while the engineer remains accountable for correctness, security, and long-term maintainability. That distinction is important because enterprise software is not judged by whether code looks impressive when it is generated. It is judged by whether systems remain stable as requirements, dependencies, users, and risks change.

At scale, the real question becomes less about whether AI can produce code and more about whether the organization can absorb AI-produced code safely. Engineering teams will need clearer ownership models, better review rituals, stronger architecture discipline, and more explicit rules about where AI output can be trusted. The manager’s role also changes. Leaders will have to decide which tasks are suitable for AI assistance, which require senior review, which should remain human-led, and where speed creates more risk than value.

This is where the workforce transition begins. The future engineering team is unlikely to be measured only by the number of developers it has or the amount of code it ships. It will be measured by how effectively humans and AI systems work together inside a controlled delivery model. Junior developers may spend more time learning through review, debugging, and integration rather than only writing from scratch. Senior engineers may become more important as system reviewers, architecture guardians, and decision-makers who understand where automated output fits and where it fails. Product managers, QA teams, security teams, and engineering leads will also be pulled closer into the development process because AI-assisted work increases the need for shared context.

The bigger change, then, is a redesign of engineering work. Some tasks become faster, some roles become more judgment-heavy, and some workflows require tighter governance. The companies that benefit most will not be the ones that simply add AI tools to existing teams and hope productivity improves. They will be the ones that rebuild the operating model around faster generation, stronger review, and clearer accountability.

Understanding this distinction matters because it changes the decision companies need to make. The serious question is no longer whether AI agents can replace engineers in some broad abstract sense. The more immediate question is whether organizations are prepared for a development environment where code is easier to produce, harder to govern, and more dependent on human judgment at the points where mistakes become expensive.

For all the reasons to question the current narrative, there is still a counterpoint that is difficult to dismiss. The history of artificial intelligence, and of technology more broadly, offers repeated instances in which progress was underestimated in direction even when it was overestimated in timing. Systems that initially appeared brittle or narrow have, over successive iterations, crossed thresholds that changed how they were perceived and used.

The current generation of models has already shown a version of this pattern. Capabilities that were once treated as distinct (language understanding, code generation, reasoning, and tool use) are increasingly being integrated into unified systems. Benchmarks that attempt to measure this shift, such as SWE-bench, which evaluates the ability of models to resolve real GitHub issues, show steady improvement across versions, even if performance remains far from complete. The progress is uneven, but it is directional: each iteration expands the range of problems that can be addressed with partial automation.

What makes this trajectory difficult to interpret is the distinction between capability and reliability. A system may prove that it can solve a class of problems without being able to do so consistently across varied conditions. Early aviation, early computing, and even early internet systems all showed this gap. The first instances of success did not immediately translate into stable, widespread deployment, but they signaled that a boundary had been crossed.

AI systems today appear to be operating in a similar space. They can solve problems that were previously out of reach, but they do so with variability that limits their use in high-stakes environments. This is where the current moment becomes more complex than a simple debate between hype and reality. The systems being developed are not static; they are being tested, deployed, and refined in environments that generate constant feedback. Each failure exposes a limitation, but it also provides data that can be used to improve the next iteration. Over time, this process can convert isolated capability into dependable behavior.

There is also a shift in how organizations are learning to work with these systems. Early interactions were based on direct prompting and one-off outputs. More recent approaches involve chaining tasks, keeping context, and integrating models into broader workflows. These changes are not always visible in public demonstrations, but they alter how systems are used in practice. As workflows evolve, the effective capability of the system can increase even without a fundamental breakthrough in the underlying model.

From this perspective, the argument made by optimists is that the trajectory is closer to a tipping point than it appears. The gap between what is possible in isolated cases and what is reliable in production may narrow faster than expected, particularly as engineering effort shifts toward making these systems more stable, more predictable, and better integrated into real environments.

This possibility complicates the critique. If the optimists are broadly correct, then what appears today as overstatement could be an attempt to describe a transition that is still in its initial stages. The behaviors shaped by that narrative, changes in hiring, investment, and system design, would not be premature. They would be responses to a trajectory that is already underway, even if it is not yet fully visible in its final form.

This does not resolve the tension. It makes it harder to ignore, because it leaves two interpretations in place at the same time. The narrative may be compressing a timeline that will take longer to unfold, leading to decisions that assume more maturity than exists. Or it may be capturing a trajectory that is advancing faster than conventional expectations, in which case the greater risk lies in preparing too slowly.

The debate over whether AI agents will replace software engineers may remain unsettled for some time. When Jensen Huang suggests that coding may no longer be a primary skill, when Sam Altman speaks about systems that can carry out extended sequences of work, and when Satya Nadella positions AI as a new layer across the software stack, they are not just offering predictions. They are setting expectations that ripple through hiring plans, product decisions, and how engineering itself is organized. The question is no longer whether these timelines are correct in detail, but how much their articulation is already influencing how the future is being built.
